JENNIFER – Kubernetes Environment (AKS, EKS, GKE, …) Support

Evolution to the Container Environment

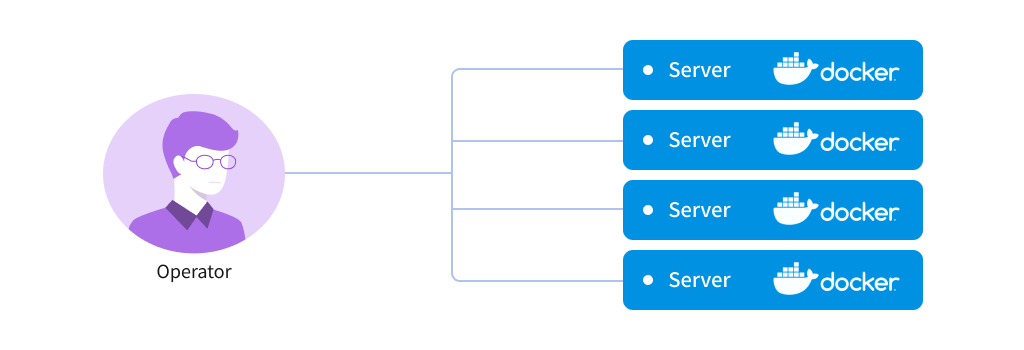

Container virtualization takes virtualization to a different level from VMs, which virtualize physical machines, and it is now being successfully introduced into operating environments alongside the spread of microservices. However, the container layer only separates the execution environment from the operating environment. Operating containers directly is therefore practical only for small services, and running a large real-world service this way is difficult. In other words, as shown in "Figure 1," when an operator has to manage many containers by hand, management mistakes become highly likely.

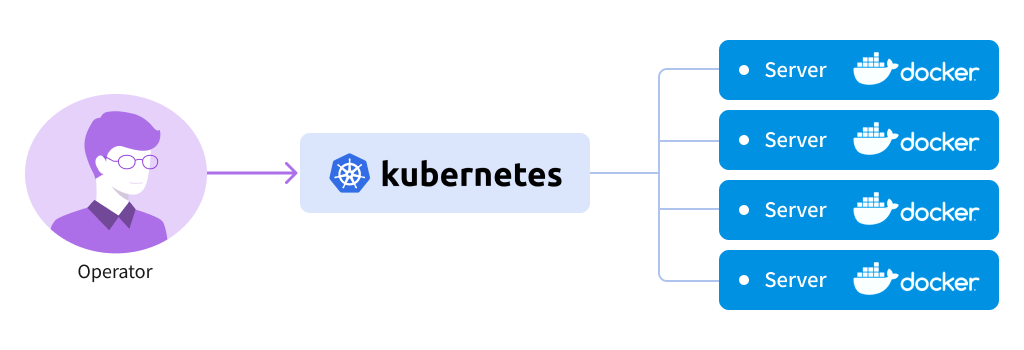

To compensate for this weakness, orchestration tools that abstract container management have emerged. As shown in "Figure 2," a system operator can use these tools to handle many containers at once, which makes it practical to adopt container technologies for large-scale services.

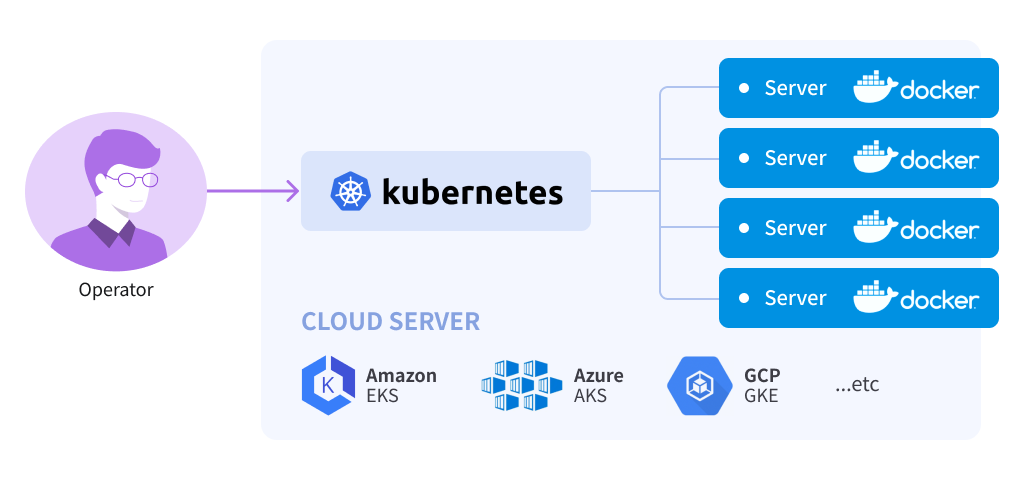

Cloud service providers are moving quickly to bring this change in operating environments into their product lines. Why? Because even with orchestration tools, we still need infrastructure on which the containers actually run. A cloud service provider can offer a fully abstracted container service simply by placing a k8s service on top of its existing virtual infrastructure, and this fits well with the "infrastructure management cost reduction" that every cloud service emphasizes. Reflecting this trend, many such services are now in operation, including Azure's AKS, GCP's GKE, and AWS's EKS.

JENNIFER Agent Support for k8s

Note

We use the AKS environment as an example in this article, but from the JENNIFER product's perspective this is ultimately about supporting k8s, so other environments can be supported in a similar way.

In addition, there are many different container technologies, but here we focus only on Docker.

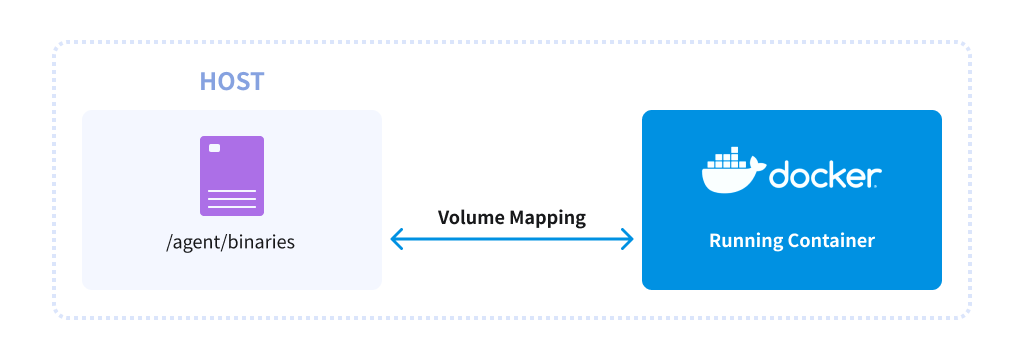

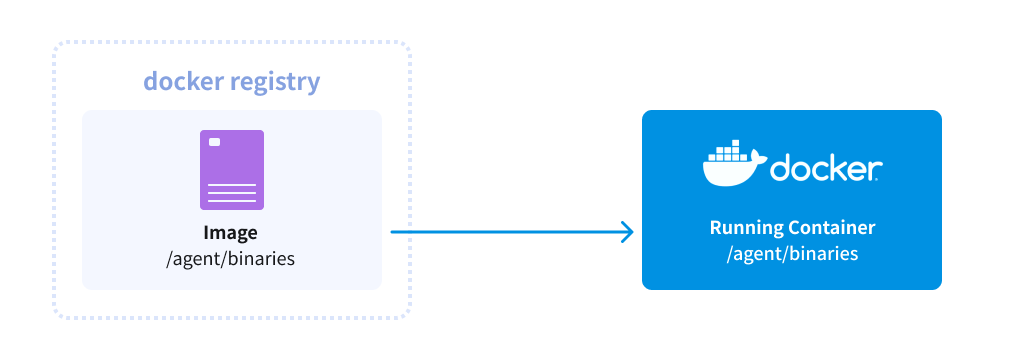

Basically, a JENNIFER agent supports k8s in the same way that it supports a plain container environment. Ultimately, the container has to be told where the agent's executable files are and which environment variables to set. In the past, a JENNIFER agent was enabled in a Docker environment in one of the following two ways.

1. Mount the agent's binaries as a volume and set the agent's environment variables.

2. Build the agent's binaries and environment variables into the existing container's Dockerfile.

The k8s orchestration tool deploys in units of pods, which wrap containers one level further, so the agent's k8s support still follows the two principles above. In practice, method 2 can be used as-is in a k8s environment as well.
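To make the difference between the two methods concrete, here is a minimal Python sketch that renders each one. Method 1 becomes a `docker run` command with a volume mount (`-v`) and environment variables (`-e`); method 2 appends `COPY` and `ENV` lines to an existing Dockerfile. The environment variable values are taken from the Deployment example in this article, while the image names and agent directories (`my-app:latest`, `/opt/netagent`, `./netagent`) are hypothetical placeholders.

```python
import shlex
from typing import List

# Agent settings taken from the Deployment example in this article.
AGENT_ENV = {
    "CORECLR_ENABLE_PROFILING": "1",
    "CORECLR_PROFILER": "{6C7CAF0F-D0E5-4274-A71B-6551761BBDC8}",
    "CORECLR_PROFILER_PATH": "/netagent/bin/libAriesProfiler.so",
    "ARIES_SERVER_ADDRESS": "127.0.0.1",
    "ARIES_SERVER_PORT": "5000",
    "ARIES_DOMAIN_ID": "1000",
}
AGENT_MOUNT = "/netagent"  # directory where the agent binaries are expected


def docker_run_args(image: str, host_agent_dir: str) -> List[str]:
    """Method 1: mount the agent binaries as a volume and pass env vars at run time."""
    args = ["docker", "run", "-d", "-v", f"{host_agent_dir}:{AGENT_MOUNT}"]
    for key, value in AGENT_ENV.items():
        args += ["-e", f"{key}={value}"]
    args.append(image)
    return args


def extend_dockerfile(existing: List[str], local_agent_dir: str) -> List[str]:
    """Method 2: add the agent binaries and env vars to the app's existing Dockerfile."""
    lines = list(existing)  # leave the caller's list untouched
    # Appending puts the lines into the final build stage, i.e. the image that runs.
    lines.append(f"COPY {local_agent_dir} {AGENT_MOUNT}")
    for key, value in AGENT_ENV.items():
        lines.append(f'ENV {key}="{value}"')
    return lines


if __name__ == "__main__":
    # Hypothetical image name and agent directories, for illustration only.
    print(shlex.join(docker_run_args("my-app:latest", "/opt/netagent")))
    base = ["FROM my-app-base:latest", 'ENTRYPOINT ["dotnet", "MyApp.dll"]']
    print("\n".join(extend_dockerfile(base, "./netagent")))
```

Method 1 keeps the application image untouched and swaps the agent at deploy time, which is the idea the k8s PV approach below builds on; method 2 produces a self-contained image but requires a rebuild whenever the agent changes.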

However, unlike the plain Docker setup, where a volume is attached directly to a container, in k8s a volume is attached to a pod, which can group several containers. The agent's binaries are therefore connected to the container through a PV (Persistent Volume) configured in k8s.

Once the volume containing the agent's binaries is ready, the user only needs to add a volume bound to that PV, along with the env settings, to the YAML file used to deploy the service.
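The manifest below refers to a PersistentVolumeClaim named `my-agent-file`. As a minimal sketch, assuming the claim is created separately, the following Python builds such a claim and the matching pod-level volume fragment as plain dictionaries (kubectl also accepts JSON manifests). The `ReadWriteMany` access mode, the `1Gi` size, and the optional storage class are assumptions: the mode lets the agent files be copied in once and then shared by pods on different nodes, but only if the backing storage supports it. Copying the binaries into the volume is not shown.

```python
import json
from typing import Optional


def agent_pvc(claim_name: str, size: str = "1Gi",
              storage_class: Optional[str] = None) -> dict:
    """Build a PersistentVolumeClaim that pods can share to read the agent binaries."""
    spec = {
        # Assumed access mode: shared across nodes, if the storage class allows it.
        "accessModes": ["ReadWriteMany"],
        "resources": {"requests": {"storage": size}},  # size is a placeholder
    }
    if storage_class is not None:
        spec["storageClassName"] = storage_class
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": claim_name},
        "spec": spec,
    }


def pod_volume_fragment(claim_name: str, mount_path: str = "/netagent") -> dict:
    """Return the volumes/volumeMounts pieces that point a container at the claim."""
    return {
        "volumes": [{"name": "volume",
                     "persistentVolumeClaim": {"claimName": claim_name}}],
        "volumeMounts": [{"mountPath": mount_path, "name": "volume"}],
    }


if __name__ == "__main__":
    # The output could be saved to a file and applied with `kubectl apply -f`.
    print(json.dumps(agent_pvc("my-agent-file"), indent=2))
    print(json.dumps(pod_volume_fragment("my-agent-file"), indent=2))
```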

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: net-razor31-sample
  # ...[Omitted]...
spec:
  # ...[Omitted]...
  template:
    # ...[Omitted]...
    spec:
      containers:
        - # ...[Omitted]...
          env:
            - name: CORECLR_ENABLE_PROFILING
              value: "1"
            - name: CORECLR_PROFILER
              value: "{6C7CAF0F-D0E5-4274-A71B-6551761BBDC8}"
            - name: CORECLR_PROFILER_PATH
              value: "/netagent/bin/libAriesProfiler.so"
            - name: ARIES_SERVER_ADDRESS
              value: "127.0.0.1"
            - name: ARIES_SERVER_PORT
              value: "5000"
            - name: ARIES_DOMAIN_ID
              value: "1000"
          volumeMounts:
            - mountPath: "/netagent"
              name: volume
      volumes:
        - name: volume
          persistentVolumeClaim:
            claimName: my-agent-file
```
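Two details in this manifest must line up: `CORECLR_PROFILER_PATH` has to point inside the `mountPath` where the PV is mounted, and each `volumeMounts` entry has to name a volume declared under `volumes`. A small, hypothetical pre-deployment check for both is sketched below in Python. To stay dependency-free it works on a dictionary that mirrors the pod template spec rather than parsing YAML; the container name `app` is a placeholder because the original manifest omits it.

```python
from pathlib import PurePosixPath
from typing import List

# Environment variables used by the .NET agent in the Deployment above.
REQUIRED_ENV = (
    "CORECLR_ENABLE_PROFILING",
    "CORECLR_PROFILER",
    "CORECLR_PROFILER_PATH",
    "ARIES_SERVER_ADDRESS",
    "ARIES_SERVER_PORT",
    "ARIES_DOMAIN_ID",
)


def check_agent_wiring(pod_spec: dict) -> List[str]:
    """Return human-readable problems in a pod template spec (empty list if none)."""
    problems = []
    volume_names = {v["name"] for v in pod_spec.get("volumes", [])}
    for container in pod_spec.get("containers", []):
        cname = container.get("name", "<unnamed>")
        env = {e["name"]: e.get("value") for e in container.get("env", [])}
        mounts = container.get("volumeMounts", [])

        for key in REQUIRED_ENV:
            if key not in env:
                problems.append(f"{cname}: missing env {key}")
        if env.get("CORECLR_ENABLE_PROFILING", "1") != "1":
            problems.append(f'{cname}: CORECLR_ENABLE_PROFILING should be "1"')

        # Every mount must refer to a volume declared at the pod level.
        for m in mounts:
            if m["name"] not in volume_names:
                problems.append(f"{cname}: volumeMount '{m['name']}' has no matching volume")

        # The profiler library must live inside one of the mounted directories.
        profiler = env.get("CORECLR_PROFILER_PATH")
        if profiler is not None:
            parents = PurePosixPath(profiler).parents
            if not any(PurePosixPath(m["mountPath"]) in parents for m in mounts):
                problems.append(f"{cname}: CORECLR_PROFILER_PATH is not under any mountPath")
    return problems


# Mirror of the pod template spec above; the container name "app" is hypothetical.
SAMPLE_POD_SPEC = {
    "containers": [{
        "name": "app",
        "env": [
            {"name": "CORECLR_ENABLE_PROFILING", "value": "1"},
            {"name": "CORECLR_PROFILER", "value": "{6C7CAF0F-D0E5-4274-A71B-6551761BBDC8}"},
            {"name": "CORECLR_PROFILER_PATH", "value": "/netagent/bin/libAriesProfiler.so"},
            {"name": "ARIES_SERVER_ADDRESS", "value": "127.0.0.1"},
            {"name": "ARIES_SERVER_PORT", "value": "5000"},
            {"name": "ARIES_DOMAIN_ID", "value": "1000"},
        ],
        "volumeMounts": [{"mountPath": "/netagent", "name": "volume"}],
    }],
    "volumes": [{"name": "volume", "persistentVolumeClaim": {"claimName": "my-agent-file"}}],
}

if __name__ == "__main__":
    print(check_agent_wiring(SAMPLE_POD_SPEC) or "agent wiring looks consistent")
```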

After that, the user can apply the YAML with kubectl and check that monitoring works properly.

```shell
$ kubectl apply -f demo.yaml
```

One advantage of a cloud platform is that the user can freely add or remove worker nodes, backed by abstracted infrastructure, in k8s. At the same time, the user can scale the number of pod instances of an app manually or automatically. K8s supports the Horizontal Pod Autoscaler (hereinafter, HPA) for automatic scaling, and the same commands work in AKS as well. For example, if the deployment from "demo.yaml" above should normally run one instance and scale up to at most 3 instances depending on the load, it can be configured as follows.

```shell
$ kubectl autoscale deployment --max=3 net-razor31-sample --min=1
horizontalpodautoscaler.autoscaling/net-razor31-sample autoscaled
$ kubectl get pod
NAME                                  READY   STATUS    RESTARTS   AGE
net-razor31-sample-6b747bb886-c2kgk   1/1     Running   0          116s
```
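How the HPA chooses a pod count can be sketched with the core rule from the Kubernetes documentation: desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), skipped when the ratio is within a tolerance (0.1 by default) and then clamped to the `--min`/`--max` bounds. The Python below is a simplified illustration only; it ignores stabilization windows, unready pods, and missing metrics, and the 80% CPU target in the examples is an arbitrary value chosen for illustration.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 3,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA rule: ceil(current * current/target), clamped to [min, max]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: keep the current count
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Average CPU utilization (%) across pods versus an illustrative 80% target.
    print(desired_replicas(1, 50, 80))   # idle: stays at 1
    print(desired_replicas(1, 200, 80))  # ceil(2.5) = 3
    print(desired_replicas(2, 400, 80))  # ceil(10) = 10, capped at --max=3
    print(desired_replicas(3, 10, 80))   # ceil(0.375) = 1, back to --min=1
```

With `--min=1 --max=3` as in the command above, the deployment therefore never drops below one pod, even when idle, and never grows past three, however high the load.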

As shown here, one pod is running even without any load, yet nothing may appear in the JENNIFER console. Why? Because a pod being up does not mean that any request has yet been sent to the application running inside it.

Of course, if a load test is performed in this state,

```shell
$ docker run --name loadtest --rm -it azch/loadtest http://...[Service URL]
```

the HPA will automatically scale the deployment out to 3 pods, and the user can check the results in the JENNIFER console.